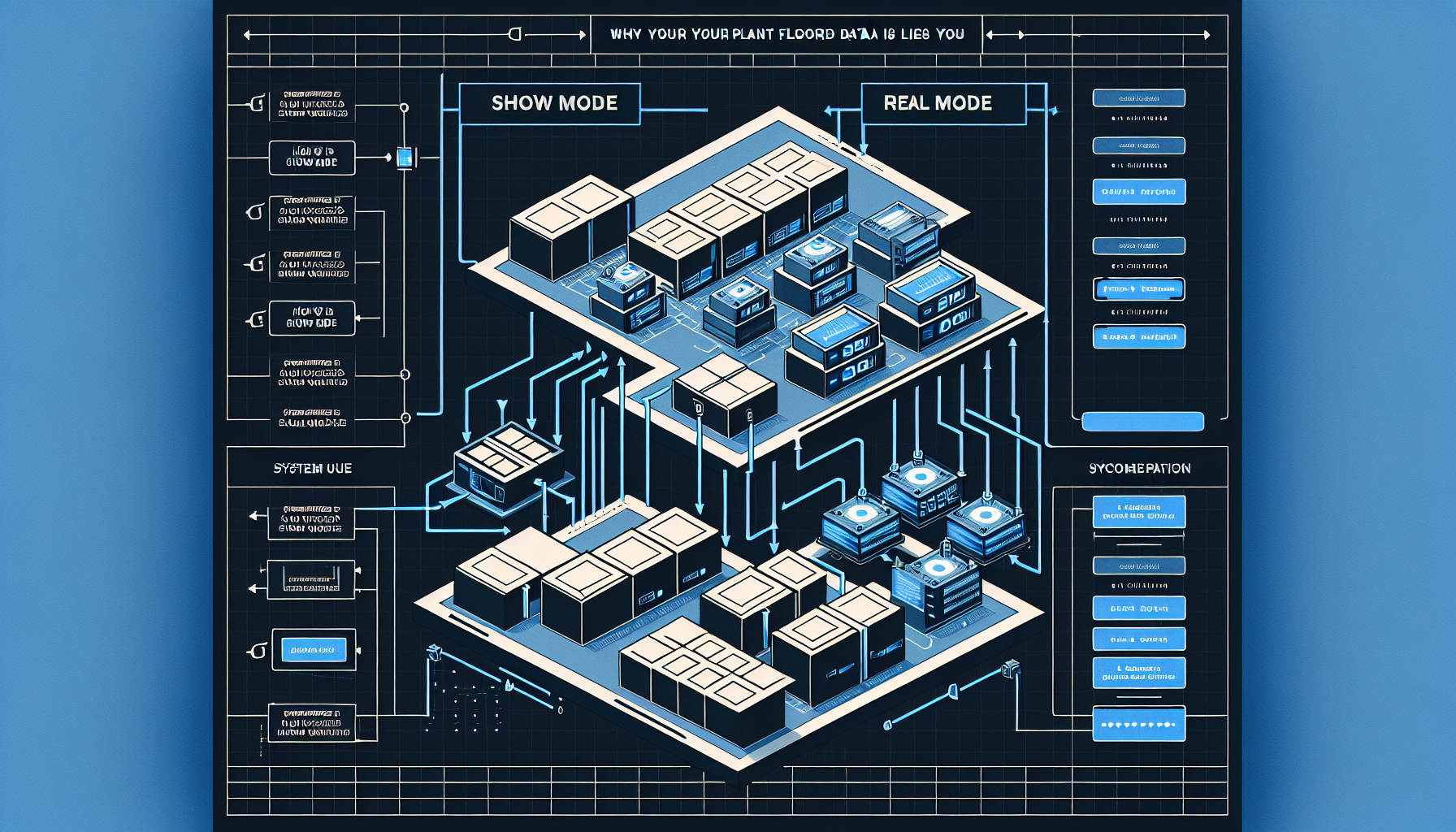

Show Mode vs. Real Mode: Why Your Plant Floor Data Lies to You

Your operations director is visiting next week. The plant scrambles. Overtime gets quietly approved. The line that always jams gets extra attention. The spreadsheet with manual workarounds gets hidden. Everyone knows what to say when asked how things are running.

By the time leadership arrives, the plant looks flawless. KPIs are green. The tour goes smoothly. Everyone says the right things. Leadership leaves satisfied.

But here’s the problem: your data systems were watching the whole time. And now they think that’s how your plant actually runs.

The Cost of Performance

Manufacturing operations don’t pause for visitors. When a plant enters “show mode,” the pressure doesn’t replace the work. It adds to it. Employees work overtime to prepare. Managers script responses. Workarounds get temporarily disabled. Problems get hidden.

The immediate cost is stress and extra hours. The long-term cost is worse: corrupted data.

Your ERP system logs those overtime hours as “normal operations.” Your quality metrics capture artificially low defect rates. Your throughput dashboards record production speeds that only happen when everyone’s watching. Your maintenance logs show equipment running smoothly because technicians pre-fixed everything the night before.

Then leadership uses that data to set next quarter’s targets. Engineering uses it to model capacity. Finance uses it to forecast costs. Product teams use it to promise delivery timelines.

All based on theater.

Why “Real Mode” Matters for Software Decisions

If you’re building or buying operational software based on how your plant runs during audits and leadership visits, you’re optimizing for the wrong reality.

Here’s what happens:

- Your dashboards lie. KPIs that look great during show mode create false confidence. When real operations resume, the numbers drop, and no one knows why.

- Your forecasts fail. Capacity models built on show-mode throughput over-promise what the plant can actually deliver.

- Your automation breaks. If you’re implementing AI, machine learning, or autonomous systems, they’re learning from corrupted training data. They’ll optimize for conditions that don’t exist.

- Your integrations miss the workarounds. When you map “official” processes for ERP integrations or API connections, you miss the 14 spreadsheets your team actually uses to get work done.

Real mode is messy. Equipment jams. People improvise. Critical logic lives in someone’s head. Spreadsheets bridge gaps between systems. Manual steps fill holes the ERP never addressed.

That’s the reality your software needs to serve.

How Show Mode Creeps Into Data Systems

It’s not always leadership visits. Show mode happens whenever the plant feels watched:

| Trigger | Show Mode Response | Data Impact |
|---|---|---|
| Executive plant tour | Pre-fix problems, script answers | Equipment uptime appears higher than normal |
| Monthly KPI review | Rush orders, manual data cleanup | Quality and throughput spike artificially |
| External audit | Disable workarounds, follow official procedures | Process compliance looks perfect, throughput drops |
| New system implementation | Enter clean data, skip edge cases | System appears to work until real operations resume |

Every time this happens, your data drifts further from operational truth.
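One rough way to see that drift is to tag the days the plant knew it was being watched and compare a KPI on those days against ordinary days. The sketch below is a minimal illustration, not a description of any particular system: the window dates, the `show_mode_gap` helper, and the throughput numbers are all hypothetical.

```python
from datetime import date
from statistics import mean

# Hypothetical windows when the plant knew it was being watched.
# In practice these might come from a visit or audit calendar.
SHOW_MODE_WINDOWS = [
    (date(2025, 3, 11), date(2025, 3, 12)),  # illustrative executive tour
]


def is_show_mode(day, windows):
    """Return True if `day` falls inside any (start, end) window, inclusive."""
    return any(start <= day <= end for start, end in windows)


def show_mode_gap(records, windows):
    """Mean KPI on show-mode days minus mean KPI on ordinary days.

    `records` is a list of (date, value) pairs. Returns None when either
    group is empty, since no comparison is possible.
    """
    show = [v for d, v in records if is_show_mode(d, windows)]
    real = [v for d, v in records if not is_show_mode(d, windows)]
    if not show or not real:
        return None
    return mean(show) - mean(real)


# Made-up daily throughput (units per day), for illustration only.
daily_throughput = [
    (date(2025, 3, 10), 100),
    (date(2025, 3, 11), 120),
    (date(2025, 3, 12), 124),
    (date(2025, 3, 13), 96),
    (date(2025, 3, 14), 104),
]

gap = show_mode_gap(daily_throughput, SHOW_MODE_WINDOWS)
print(f"Show-mode days ran {gap} units/day above ordinary days")
```

A large gap does not prove anything on its own, but it is a prompt to ask which number the targets and forecasts were actually built on.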

A recent IndustryWeek article highlighted this problem: When employees feel pressure to make everything look perfect for leadership, they create a “show mode” culture where honesty hides and operational risk festers in silence. The same dynamic corrupts the data your systems depend on.

Building Systems That Match Reality

At Jetpack Labs, we start every engagement by understanding how work actually happens, not how it’s supposed to happen according to the process map. Here’s how we approach it:

1. Observe Real Mode Before You Build

Spend time on the floor during normal operations. Not during a leadership visit. Not during an audit. On a random Tuesday at 2 PM when no one’s expecting you.

Watch what actually happens:

- Where do workarounds live?

- What gets logged vs. what gets remembered?

- Which systems talk to each other and which require manual bridges?

- When does the official process break down and improvisation take over?

That’s where your software needs to start.

2. Build Trust Before You Build Software

If your team thinks honest feedback will get them in trouble, they’ll perform for you too. The system you build will reflect a fantasy.

Create space for truth:

- “I’m not here to see a perfect plant. I’m here to understand how things actually run.”

- “If something isn’t working well, I want to understand it so we can improve it.”

- “Show me the spreadsheets you actually use, not the ones in the official documentation.”

When people feel safe being honest, you get data that reflects reality.

3. Start With the Constraint, Not the Wishlist

Most software projects begin with a feature list based on how operations should work. That’s backward.

Instead, identify the constraint costing you the most right now. Maybe it’s the 14 spreadsheets your production team emails around every Monday. Maybe it’s tribal knowledge trapped in one person’s head. Maybe it’s the 30-minute manual reconciliation that happens every shift change.

Fix that constraint in the open. Measure the impact in real operations. Then decide what’s next.

This approach delivers value before expanding scope. And because you’re solving for real mode, the solution actually gets used.

4. Integrate the Workarounds, Don’t Ignore Them

Workarounds exist because your current systems have gaps. When you’re building or modernizing operational software, those workarounds are signal, not noise.

A spreadsheet bridging two systems? That’s a missing integration. Manual data entry after an automated process? That’s where automation failed. Tribal knowledge about which customers need special handling? That’s business logic that should live in the system.

We don’t tell clients to “stop using spreadsheets.” We ask what problem the spreadsheet is solving, then build software that solves it better.

The Autonomy Problem

If your data reflects show mode instead of real mode, autonomous manufacturing systems will fail spectacularly.

AI and machine learning systems learn from historical data. If that data captures artificial performance spikes during audits, overtime-fueled throughput during leadership visits, and temporarily disabled workarounds during implementations, your autonomous systems will optimize for conditions that don’t exist.

Then, when they encounter real mode operations, they’ll make bad decisions based on good math applied to corrupted data.

Before you invest in autonomous manufacturing, data fabric, or AI orchestration, make sure your data foundation reflects operational truth. Otherwise, you’re building on sand.

What This Looks Like in Practice

We worked with a logistics operation where reported on-time delivery was 94%. Leadership used that number to promise customers same-day service expansion.

But when we spent time in real mode, we found the number was misleading. The metric only captured “officially logged” deliveries. Drivers were manually tracking 30-40% of routes in personal notebooks because the system couldn’t handle last-minute changes, multi-stop deliveries, or customer gate access delays.

Real on-time performance? Closer to 78%.
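The arithmetic behind a gap like this is a weighted average: the official rate only covers the logged share of deliveries. The sketch below is illustrative only. It is not how the 78% figure was derived. The 35% untracked share is the midpoint of the 30-40% range and treats route share as delivery share, and the 50% on-time rate for untracked routes is a hypothetical input chosen to show how quickly the blended number falls.

```python
def blended_on_time_rate(logged_share, logged_rate, unlogged_rate):
    """Weighted on-time rate across logged and unlogged deliveries.

    All arguments are fractions between 0 and 1.
    """
    for name, value in (
        ("logged_share", logged_share),
        ("logged_rate", logged_rate),
        ("unlogged_rate", unlogged_rate),
    ):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    unlogged_share = 1.0 - logged_share
    return logged_share * logged_rate + unlogged_share * unlogged_rate


# 0.94 is the reported figure from the text. The other two inputs are
# assumptions: 35% of deliveries untracked and a hypothetical 50%
# on-time rate for those untracked deliveries.
rate = blended_on_time_rate(logged_share=0.65, logged_rate=0.94, unlogged_rate=0.50)
print(f"Blended on-time rate: {rate:.1%}")
```

The point of the exercise is that a metric covering only part of the work can look healthy while the whole operation does not.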

We didn’t build a system to make the official number look better. We built software that captured the reality drivers were already tracking manually. That gave leadership visibility into what was actually happening, which let them fix real bottlenecks instead of optimizing for fake metrics.

Six months later, real on-time delivery hit 91%. And leadership could trust the number.

Building Software for How Work Actually Happens

Jetpack Labs specializes in operational software for manufacturing, logistics, and industrial operations. We start by understanding your real constraints, not your aspirational roadmaps. Then we build systems that match reality and deliver measurable value before expanding scope.

Moving Forward

If your plant runs differently when leadership’s watching, your data isn’t telling you the truth. And if your data isn’t true, your software decisions won’t be either.

The fix isn’t complicated, but it requires honesty:

- Observe operations during real mode, not show mode

- Build trust so your team feels safe being honest

- Start with the constraint costing you the most right now

- Integrate the workarounds instead of pretending they don’t exist

- Measure impact in production before expanding scope

That’s how you build software that matches how work actually happens. Not how it looks during audits.

And when your data reflects reality, you can finally trust the decisions you’re making with it.
